The Leverage Divide: Who Rises and Who Slips in the Age of AI

AI does not affect all jobs in the same way. Some roles gain authority and influence, while others slowly lose their importance. What separates them is not intelligence, effort, or pay. It is leverage.

Recently, I have heard people describe the future of work in dramatic terms. They say companies will soon have to choose between ‘expensive but productive AI’ and ‘cheap but slow humans.’ This idea is presented like a simple business decision: replace payroll with computers, swap salaries for hardware, and focus on efficiency.

This argument sounds convincing because it turns a complicated change into a simple choice. But the real situation is less dramatic. The best AI systems are not cheap. Advanced models use a lot of computing power. AI agents run many processes, call tools, and take time to reach results. Data center capacity and energy are limited. So the choice is not simply ‘AI is cheaper than humans.’ In many cases, it is ‘AI is more powerful, but not always cheaper.’

The real issue is not simply swapping expensive people for cheaper machines. It is about leverage. AI changes how much work one person can do, how much complexity a role can handle, and how much authority is needed for decisions.

So, instead of asking if AI will replace people, we should ask: which roles gain leverage as AI gets better, and which ones lose it?

The Real Situation: Human Leverage Quadrant

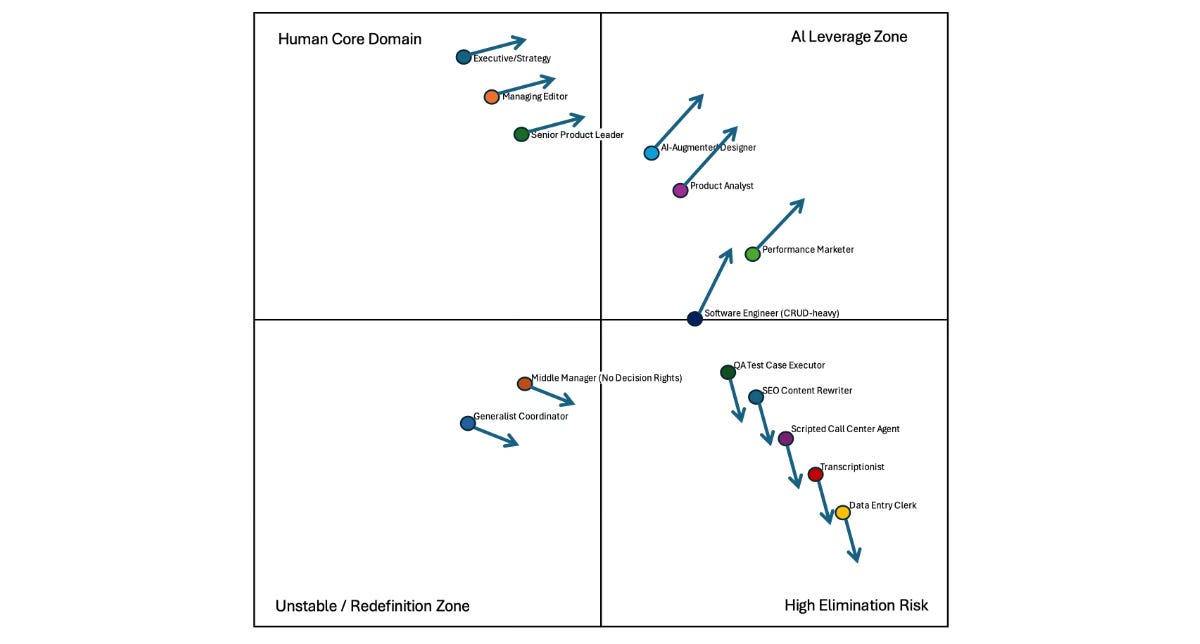

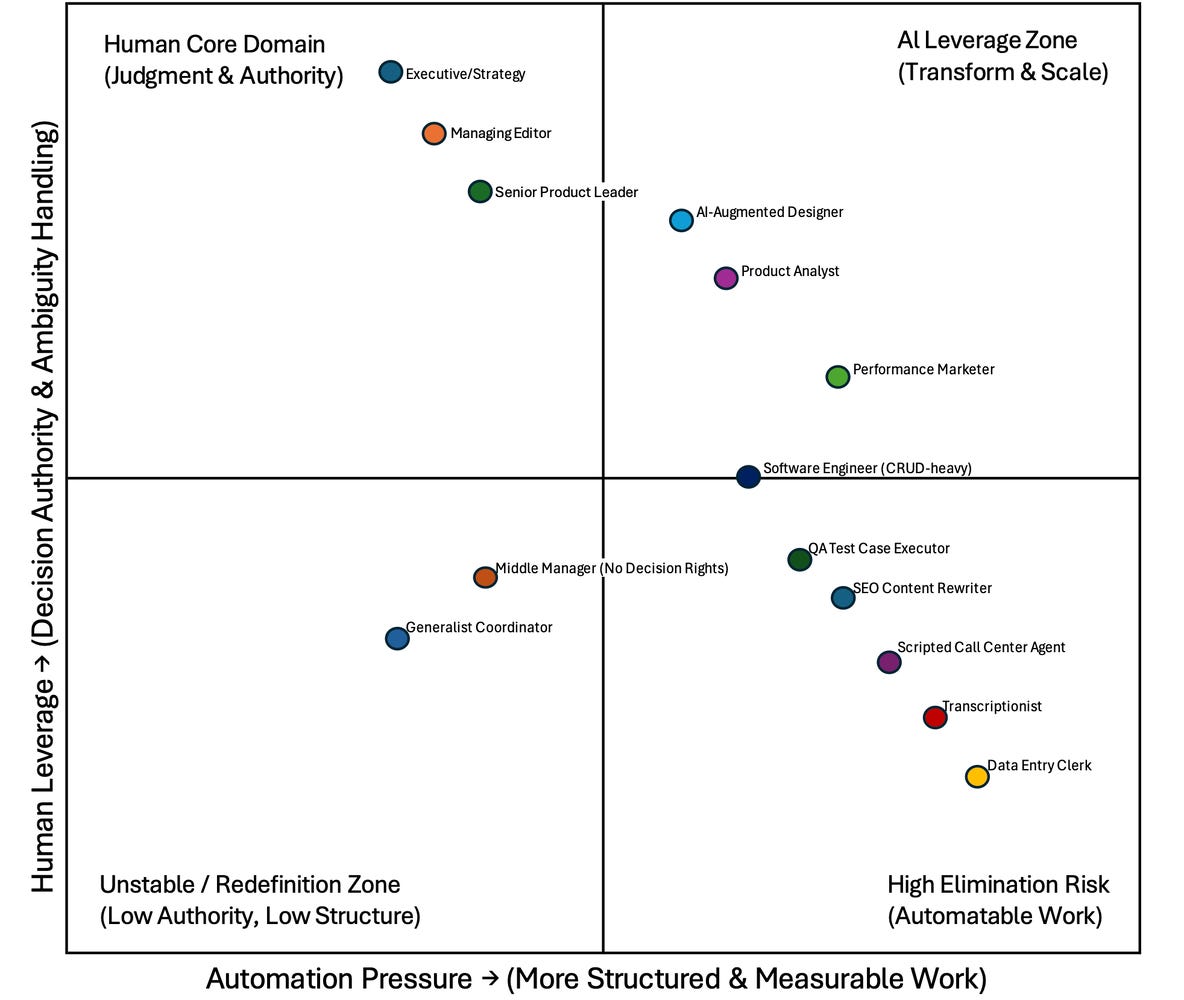

If we look beyond the headlines and think more broadly, we can map job roles using two simple factors.

The first factor is automation pressure, shown on the horizontal axis. It captures how structured and measurable the work is. If a task can be clearly defined, checked, and repeated, it can eventually be automated.

The second factor is human leverage, shown on the vertical axis. This shows how much decision-making, handling of uncertainty, and responsibility a role has. Jobs high on this axis do more than just complete tasks. They make choices, work with incomplete information, and face real consequences.

When we look at roles using these two factors, things become clearer.

In the bottom-right area, we find data entry clerks, scripted support jobs, and other routine roles. These jobs sit in highly structured settings and carry little authority. This says nothing about the intelligence of the people doing them. These roles simply face the most automation pressure. As AI gets better, the value of repetitive work goes down.

In the top-left area, there are strategy leaders, senior product managers, and managing editors: people whose jobs combine uncertainty with decision-making power. These roles are not immune to AI, but they are harder to replace. AI can help with analysis, drafts, and simulations, but it cannot take on full responsibility or handle the politics of big decisions.

The most interesting area is the top-right, called the AI Leverage Zone. Here, we see product analysts, designers who use AI, and engineers who go beyond routine work. These jobs are structured but also have real influence. As AI gets better, these roles do not go away. Instead, they grow and use AI as a tool, rather than competing with it.
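For readers who think in code, the two-factor map can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the 0-to-1 scores, the 0.5 midpoint, and the example numbers are all hypothetical and do not come from the chart. The article also does not name or describe the bottom-left area, so the sketch labels it by position only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Role:
    name: str
    automation_pressure: float  # horizontal axis, hypothetical 0.0-1.0 score
    human_leverage: float       # vertical axis, hypothetical 0.0-1.0 score


def quadrant(role: Role, midpoint: float = 0.5) -> str:
    """Place a role in one of the four areas of the map.

    The midpoint is an assumed cutoff, not a value taken from the article.
    """
    high_pressure = role.automation_pressure >= midpoint
    high_leverage = role.human_leverage >= midpoint
    if high_leverage and high_pressure:
        return "top-right (AI Leverage Zone)"
    if high_leverage:
        return "top-left"
    if high_pressure:
        return "bottom-right"
    return "bottom-left"  # not described in the article


# Illustrative scores only; they are guesses, not measurements.
roles = [
    Role("data entry clerk", automation_pressure=0.9, human_leverage=0.1),
    Role("managing editor", automation_pressure=0.2, human_leverage=0.9),
    Role("product analyst", automation_pressure=0.8, human_leverage=0.7),
]

for role in roles:
    print(f"{role.name}: {quadrant(role)}")
```

The exact scores matter less than the structure: where a role lands depends on both axes together, not on either one alone.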

This is the key change. AI does not reward intelligence alone. It rewards leverage. If your job is mostly about following set steps, AI will compete with you. If your job is about making decisions and setting direction, AI will help you do more.

This is a very different story from ‘AI versus humans.’ It is really ‘AI versus low-leverage work.’

Role Movement Under Increasing AI Capability

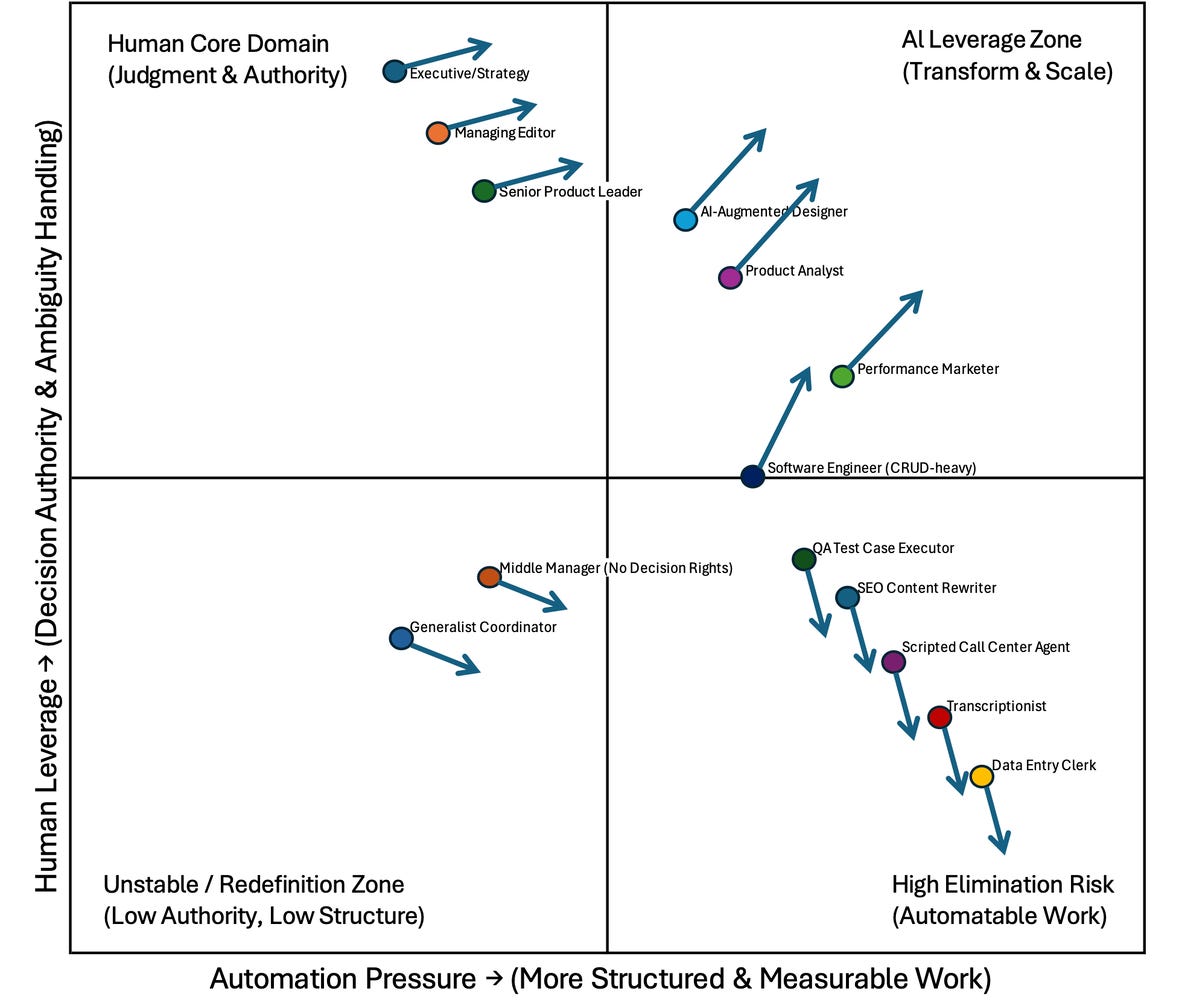

Now we reach the more complex and sometimes uncomfortable part. Job roles are not fixed points on a chart. They change over time.

As AI gets better, routine jobs tend to move lower and further right on the chart. These roles lose authority and face more automation pressure. This is not about right or wrong. It is about economics. When machines can do the same work at scale, the value of repetitive tasks drops.

Middle roles that mostly coordinate or pass along information, but lack real decision-making power, are also at risk. If a job is mainly about moving information between people or systems, AI will start to take over that work. Unless these roles gain more authority or strategic value, they will lose ground.

At the same time, roles that use AI effectively tend to move up. Engineers who build AI into systems become more influential. Analysts who manage AI models, instead of just making spreadsheets, become more valuable. Designers who guide AI tools, rather than creating everything by hand, increase both their output and their importance.

Even the top-left area shifts a bit to the right over time. Leaders who ignore AI risk losing their influence. Authority without technical skills slowly becomes just a title. The leaders who last will use AI in their decisions but still rely on their own judgment.

At this stage, a troubling pattern shows up. In the diagram, arrows in the top half mostly point upward, while those in the bottom half point downward. It seems like a story of growing inequality, with the successful gaining even more.

But this outcome depends on whether people and organizations can move up.

If people and organizations make upward movement possible, with structured roles gaining AI skills and more decision-making power, then this model shows progress, not division. But if there is little mobility, inequality will grow.

AI does not get rid of all jobs at once. It first removes low-leverage, internal roles. What is left are jobs that require higher-level judgment or very basic execution.

So the real question for all of us is not whether AI is coming. It is already here.

The question is both simple and challenging: Are we moving up in leverage faster than AI is improving in capability?

That is the real competition.